We believe data should drive innovation, not fuel fear. Security exists to enable growth, not slow it down.

With advanced data security for AI, your team can safely leverage artificial intelligence. Discover how to secure your data from AI with Fortra.

Granular AI Data Protection Controls

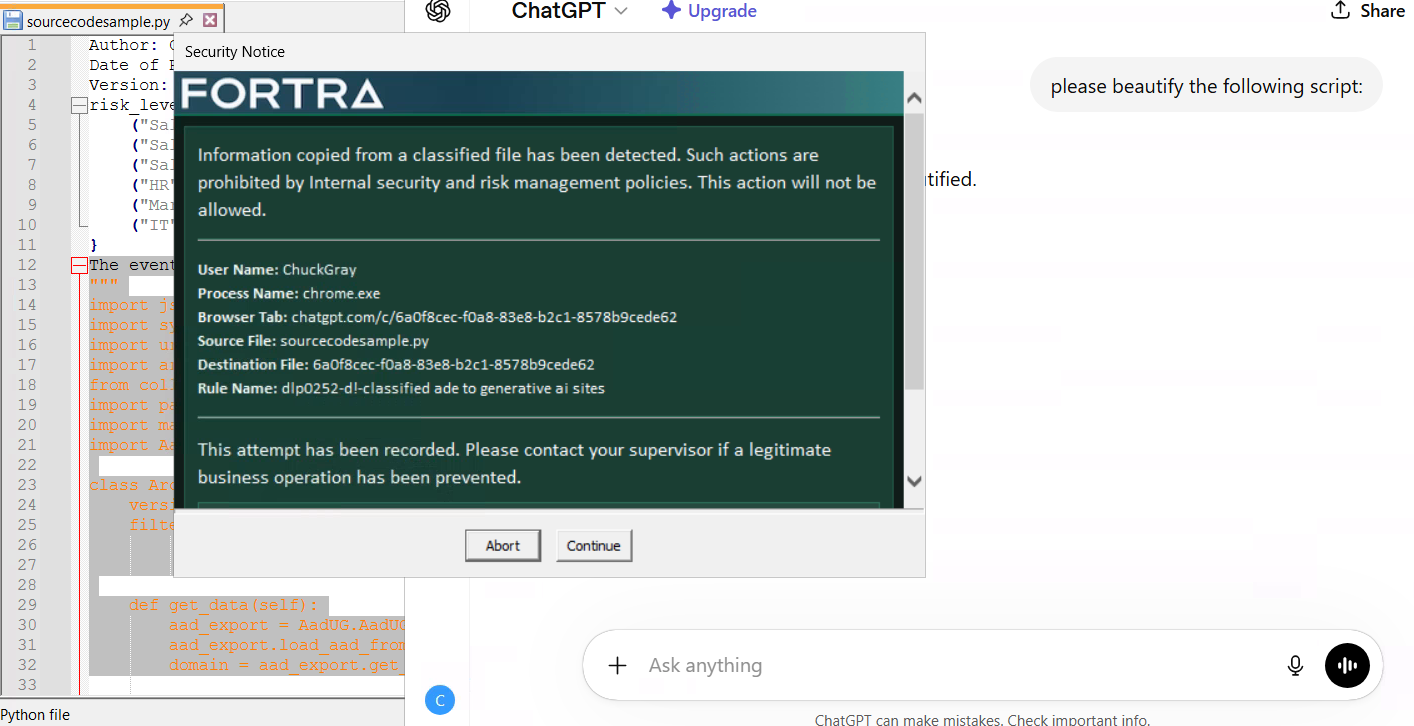

AI security requires an advanced system that can handle three interconnected challenges. With Fortra AI Data Loss Prevention (DLP), you control how your sensitive data is protected.

Flag Patterns

Monitor and block questions submitted to AI sites based on sensitive data patterns.

Block Classified Data

Prevent copying and pasting of classified data.

Outright Block

Restrict all traffic to generative AI sites for maximum security.

Fortra Data Security: 20+ Years Protecting Critical Data

Gain complete clarity on your cloud data landscape with a 30-day Data Risk Assessment, powered by DSPM.

Fortra Data Security for AI

Fortra Data Security can block data egress via AI sites right out of the box with our Generative AI Content Pack.

The content pack provides DLP solutions and reporting templates that let users work with generative AI sites while preventing the egress of classified data via copy-and-paste, file upload, or form submission.

Our Generative AI Content Pack also includes a workspace within our Analytics and Reporting Cloud (ARC) to allow for reporting on key data points such as:

- Volumes of events by users

- Types of data egressed (PII, PCI, etc.)

- Most common file types being shared via AI sites

- Operation types such as copy-paste or file upload

- Top sites where egress is occurring

The ARC dashboard offers insights on data egress and AI site activity.

Why "Free" AI Data Loss Prevention Isn’t Enough

So-called "free" solutions with hidden fees may seem cost-effective at first glance, but their limited capabilities often undercut efforts to protect against the complex risks of AI-driven data loss, unless you pay extra for additional features.

Read our datasheet to discover how our purpose-built AI DLP platform with predictable, transparent pricing and robust features is the best way to protect your data and budget.

We are at the leading edge not only of the use of AI chatbots but also of understanding their shortcomings and implications. Since each company has its own tolerance for risk, tools need to provide the flexibility to let a company ease its way into these environments. As cases in the US court system have already shown, these learning models do not discriminate: be it personal commentary or intellectual property, all data is fair game, and we need to protect what we own.

Director of Product Data Protection, Fortra

See How Fortra AI Data Security Protects Business

Request a personalized demo to explore our powerful, purpose-built AI data security solutions.